Introduction

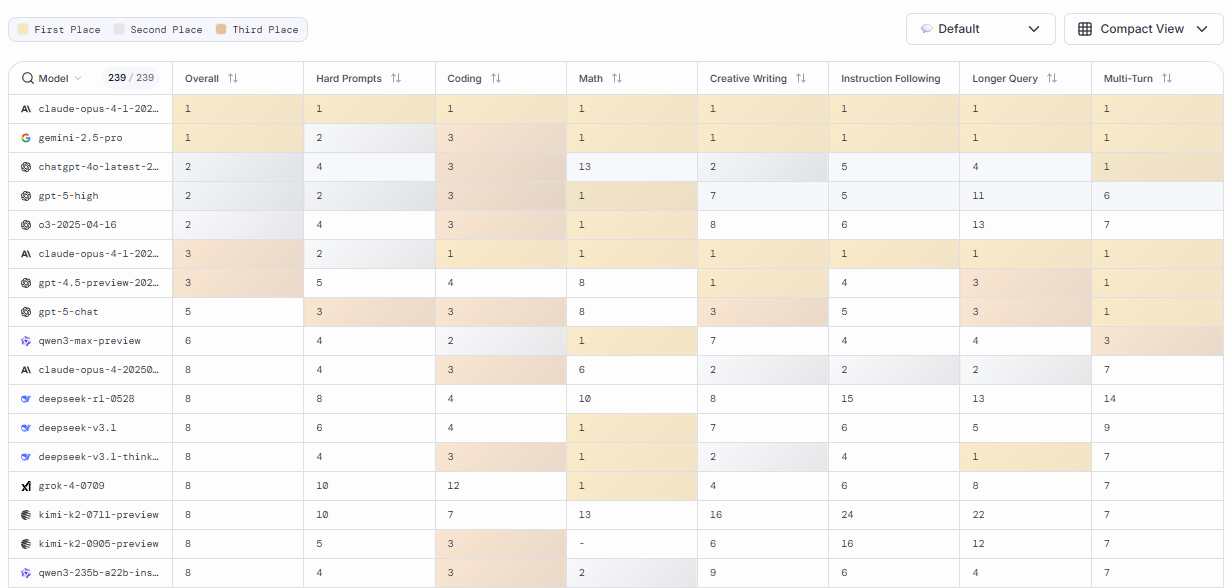

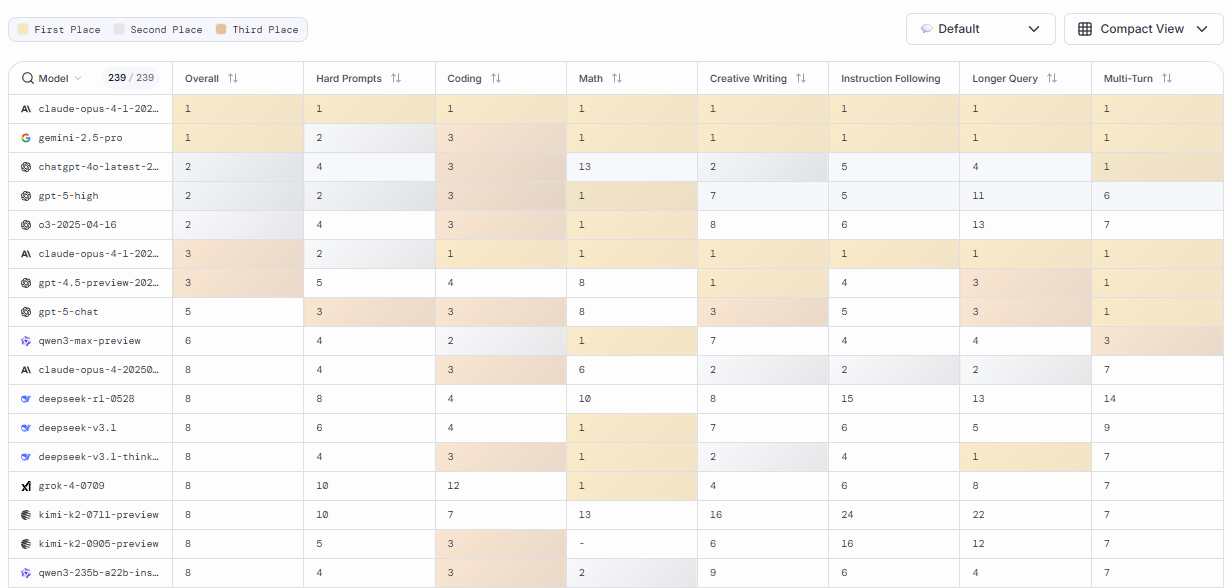

LMArena is an innovative platform designed to help businesses, developers and AI enthusiasts compare and evaluate different AI models side by side. Rather than being a tool for building AI systems, LMArena focuses on benchmarking and analysing model performance across key factors such as accuracy, response quality, speed and consistency.

This makes it especially useful for organisations that want to choose the right AI model for a specific use case without spending weeks testing multiple options manually. By providing structured comparisons and real-time evaluation, LMArena removes guesswork and enables more informed decision-making when selecting AI technologies.

Competitor Comparison

Here is how LMArena compares with other platforms used for AI evaluation and experimentation:

| Tool | Description |
|---|---|
| LMArena | Side-by-side AI model comparison with performance benchmarking |
| Hugging Face Spaces | Interactive demos and model experimentation platform |
| Papers With Code | Research benchmarking with datasets and performance tracking |
| EvalAI | Evaluation platform for AI competitions and benchmarking |
| OpenAI Playground | Testing environment for prompt experimentation |
| PromptLayer | Prompt tracking and performance monitoring for AI apps |

Compared with these tools, LMArena stands out by focusing specifically on direct model comparisons using consistent prompts, making it easier to evaluate which model performs best in real-world scenarios.

Primary Users

The main users of LMArena include:

- Businesses evaluating AI models before implementation.

- Developers selecting the best model for applications such as chatbots or automation tools.

- AI researchers comparing model performance across benchmarks.

- Product teams testing different AI models for user experience and accuracy.

- Startups looking to reduce the time and cost of AI experimentation.

Difficulty Level

LMArena is rated Easy to use.

- The interface is intuitive and straightforward.

- Users can run comparisons without deep technical knowledge.

- No coding is required for basic evaluation tasks.

- More advanced analysis may require understanding of AI model behaviour and metrics.

Overall, it is accessible to both technical and non-technical users.

Use Case Example

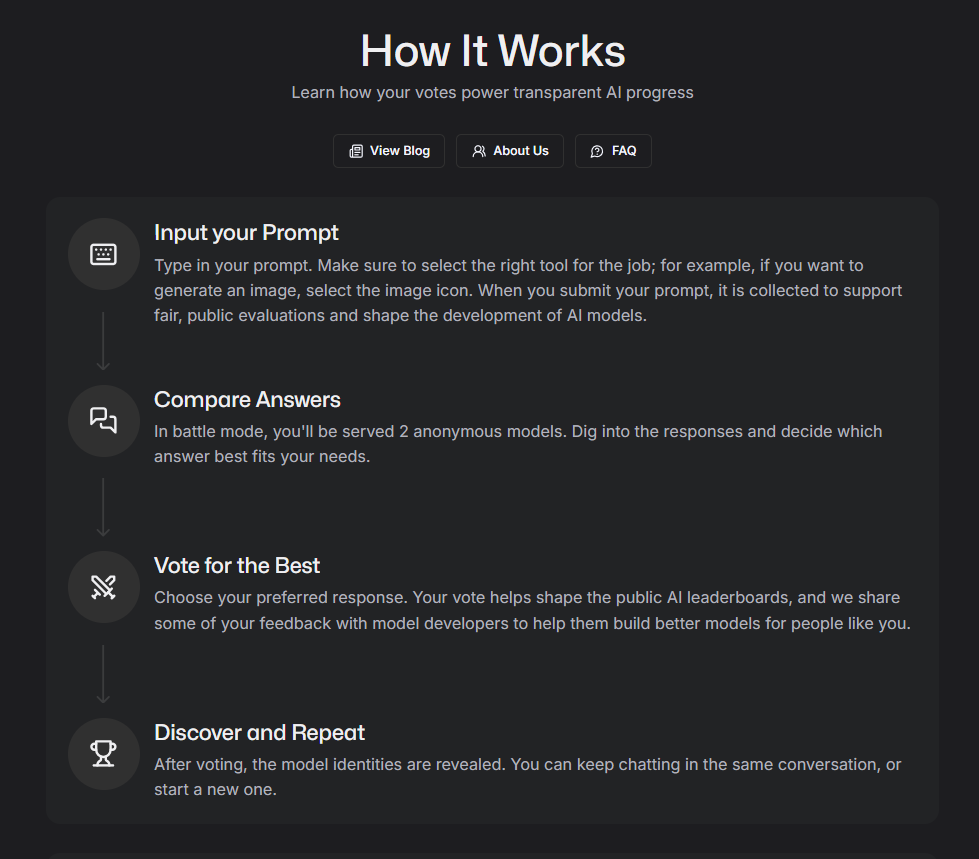

Here is a practical example of how LMArena can be used to select an AI model.

Task: A small e-commerce business wants to implement an AI-powered customer support assistant.

Steps:

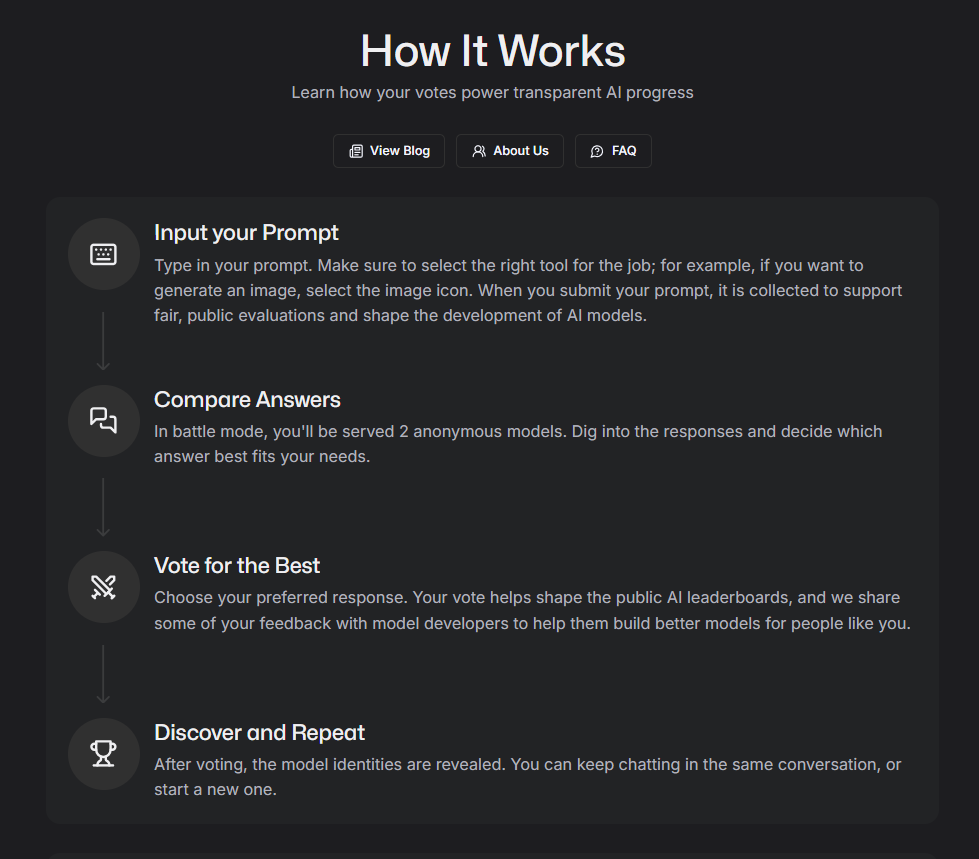

- Open LMArena and select multiple conversational AI models.

- Input the same customer queries, such as “What is your return policy?” or “Can I change my delivery address?”

- Run the prompts across each model simultaneously.

- Compare outputs based on:

  - Response accuracy

  - Tone and clarity

  - Speed of response

  - Consistency across multiple queries

- Select the model that best aligns with the business’s requirements.
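The steps above can also be sketched in code for teams that prefer a scripted check alongside the web interface. This is a minimal illustration, not LMArena's own method or API: `model_a` and `model_b` are hypothetical stand-ins for whatever model clients a business would actually call, and `compare_models` and `summarise` are names invented for this example. It sends the same prompts to every model and records response time and answer length; accuracy and tone still need a human reviewer.

```python
import statistics
import time
from typing import Callable, Dict, List

# The same customer queries are sent to every model under test.
PROMPTS = [
    "What is your return policy?",
    "Can I change my delivery address?",
]


# Hypothetical stand-ins: replace these with calls to real model clients.
def model_a(prompt: str) -> str:
    return f"Thanks for asking. Regarding '{prompt}': please see our help page."


def model_b(prompt: str) -> str:
    return f"Re: {prompt} Contact support."


MODELS: Dict[str, Callable[[str], str]] = {"model-a": model_a, "model-b": model_b}


def compare_models(
    models: Dict[str, Callable[[str], str]], prompts: List[str]
) -> Dict[str, List[dict]]:
    """Run every prompt through every model, timing each response."""
    results: Dict[str, List[dict]] = {}
    for name, ask in models.items():
        rows = []
        for prompt in prompts:
            start = time.perf_counter()
            answer = ask(prompt)
            elapsed = time.perf_counter() - start
            rows.append({"prompt": prompt, "answer": answer, "seconds": elapsed})
        results[name] = rows
    return results


def summarise(results: Dict[str, List[dict]]) -> Dict[str, dict]:
    """Average the measurable factors per model (speed and answer length)."""
    summary = {}
    for name, rows in results.items():
        summary[name] = {
            "mean_seconds": statistics.mean(r["seconds"] for r in rows),
            "mean_answer_chars": statistics.mean(len(r["answer"]) for r in rows),
        }
    return summary


RESULTS = compare_models(MODELS, PROMPTS)
SUMMARY = summarise(RESULTS)
```

Printing `RESULTS` places each model's answers to the same query next to each other for a manual read of accuracy and tone, while `SUMMARY` gives a rough per-model view of speed.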

Result/Impact

- Reduces time spent testing multiple AI models manually.

- Improves confidence in selecting the right model for specific tasks.

- Supports a better user experience by choosing high-performing models.

- Helps avoid costly implementation mistakes.

For businesses adopting AI, this can significantly improve both efficiency and outcomes.

Pros and Cons

Pros

- Allows direct comparison of multiple AI models side by side.

- Provides performance metrics and benchmarking insights.

- Saves time and resources in AI model selection.

- Easy-to-use interface with no coding required.

- Useful for both technical and non-technical users.

Cons

- Focused only on evaluation and comparison, not model creation.

- Advanced analytics features may require paid access in the future.

- Limited functionality for deploying or integrating models directly.

Integration & Compatibility

LMArena is designed to work within modern AI ecosystems.

- Web-based platform accessible from any browser.

- Compatible with models from platforms such as OpenAI and Hugging Face.

- Allows exporting comparison results for reporting or internal use.

- Can support workflows where evaluation is required before integration.

This makes it a useful tool for teams working across multiple AI platforms.
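Once comparison results are outside the platform, they can be turned into a simple report file. The sketch below assumes the results have been collected as one row per model and prompt; the column names, the `results_to_csv` helper and the placeholder scores are all hypothetical and do not reflect LMArena's actual export format, which is not specified here.

```python
import csv
import io
from typing import Dict, List

# Placeholder rows for illustration only; these are not real measurements.
EXAMPLE_ROWS: List[Dict[str, object]] = [
    {"model": "model-a", "prompt": "What is your return policy?",
     "accuracy": 4, "tone": 5, "seconds": 1.2},
    {"model": "model-b", "prompt": "What is your return policy?",
     "accuracy": 3, "tone": 4, "seconds": 0.8},
]

# Assumed column layout for the report.
FIELDNAMES = ["model", "prompt", "accuracy", "tone", "seconds"]


def results_to_csv(rows: List[Dict[str, object]]) -> str:
    """Serialise comparison rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDNAMES)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


CSV_TEXT = results_to_csv(EXAMPLE_ROWS)
```

The resulting text can be saved to a `.csv` file and opened in a spreadsheet for internal reporting.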

Support & Resources

LMArena provides several resources to help users maximise its value.

- Documentation and tutorials explaining how to run model comparisons.

- Example prompts and benchmarking scenarios.

- Community discussions where users share evaluation techniques.

- Support channels for questions related to model performance and usage.

For organisations and developers choosing between AI models, LMArena offers a practical way to compare performance, reduce uncertainty and make data-driven decisions.

If you want to explore how AI can accelerate your growth, consider joining a Nimbull AI Training Day or reach out for personalised AI Consulting services.

Introduction

LMArena is an innovative platform designed to help businesses, developers, and AI enthusiasts compare and evaluate different AI models side by side. Instead of being a tool for creating AI, LMArena focuses on analysing models’ performance, accuracy, speed, and suitability for various tasks. It’s ideal for businesses, researchers, and anyone who wants to select the best AI model for their specific requirements without guessing or extensive testing.

Competitor Comparison

LMArena competes with tools like Hugging Face Spaces, Papers With Code, EvalAI, OpenAI Playground, and PromptLayer.

Pricing & User Base

At the time of writing, LMArena offers free access for basic usage, with premium features expected in future updates.

Primary Users: Businesses, AI researchers, data scientists, educators, and AI enthusiasts.

Difficulty Level

Ease of Use: Easy

LMArena provides an intuitive interface, making it straightforward to compare AI models without technical expertise.

Use Case Example

Imagine you run a small e-commerce business and want to implement AI for customer support. You are unsure which model will provide the most accurate and natural responses.

- Open LMArena and select AI models designed for conversational tasks.

- Run the same customer inquiry prompts, like “What is your return policy?” or “Can I change my delivery address?”, across all models.

- Review performance metrics such as response accuracy, speed, and tone consistency.

- Choose the model that best aligns with your business needs.

This approach removes the guesswork, ensures your AI assistant is reliable, and saves time and money.

Pros and Cons

Pros

- Compare multiple AI models side by side

- Provides performance metrics and benchmarking

- Saves time and resources for AI selection

Cons

- Limited to evaluation; does not create AI models

- Some advanced analytics may require premium access in future

- Focused mainly on model comparison, not deployment

Integration & Compatibility

- Web-based platform accessible from any modern browser

- Compatible with AI models from popular frameworks like OpenAI, Hugging Face, and others

- Allows exporting comparison results for reporting or internal use

Support & Resources

- Detailed documentation and tutorials

- Community forum for sharing insights and tips

- Contact support for questions about model evaluation
